Fans of Amnesty International and proponents of artificial insemination are probably growing increasingly alarmed at the rate at which their favourite acronym is being usurped by the tech industry. Artificial Intelligence, or AI, is the loosely defined buzzword of the hour. In fairness to Nvidia, it is one of the companies at the forefront of developing and accelerating AI, especially in the field of deep learning.

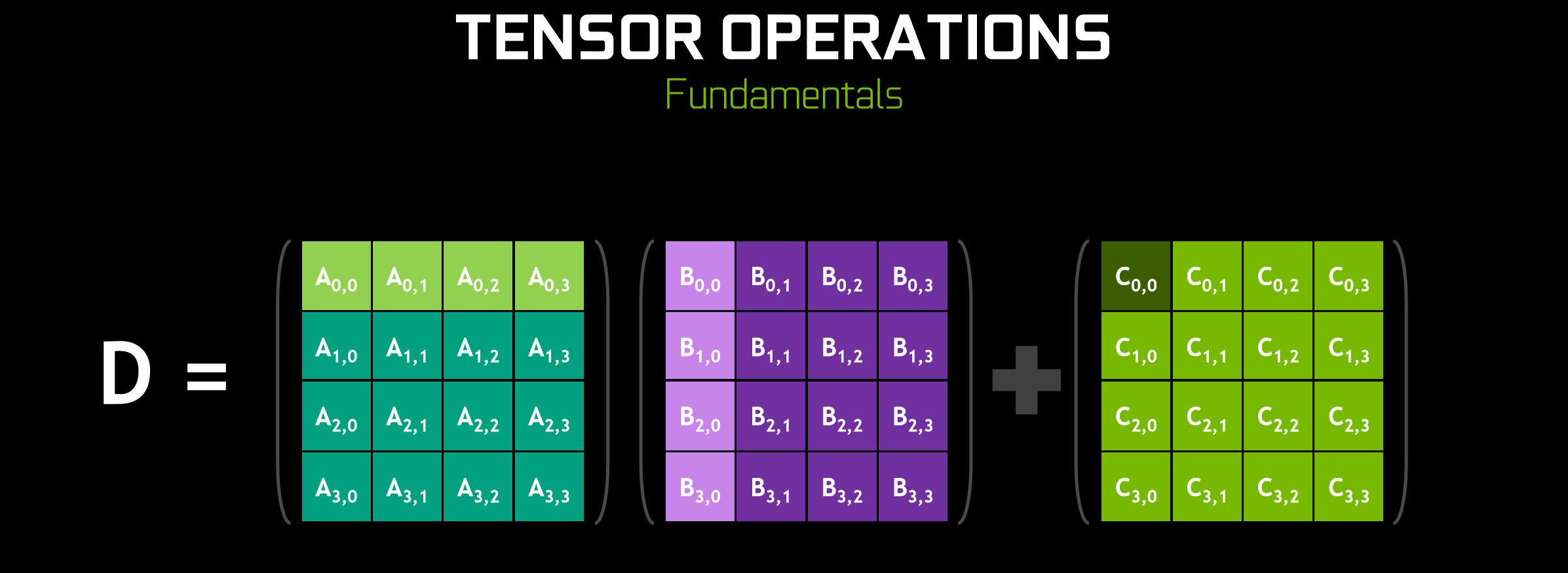

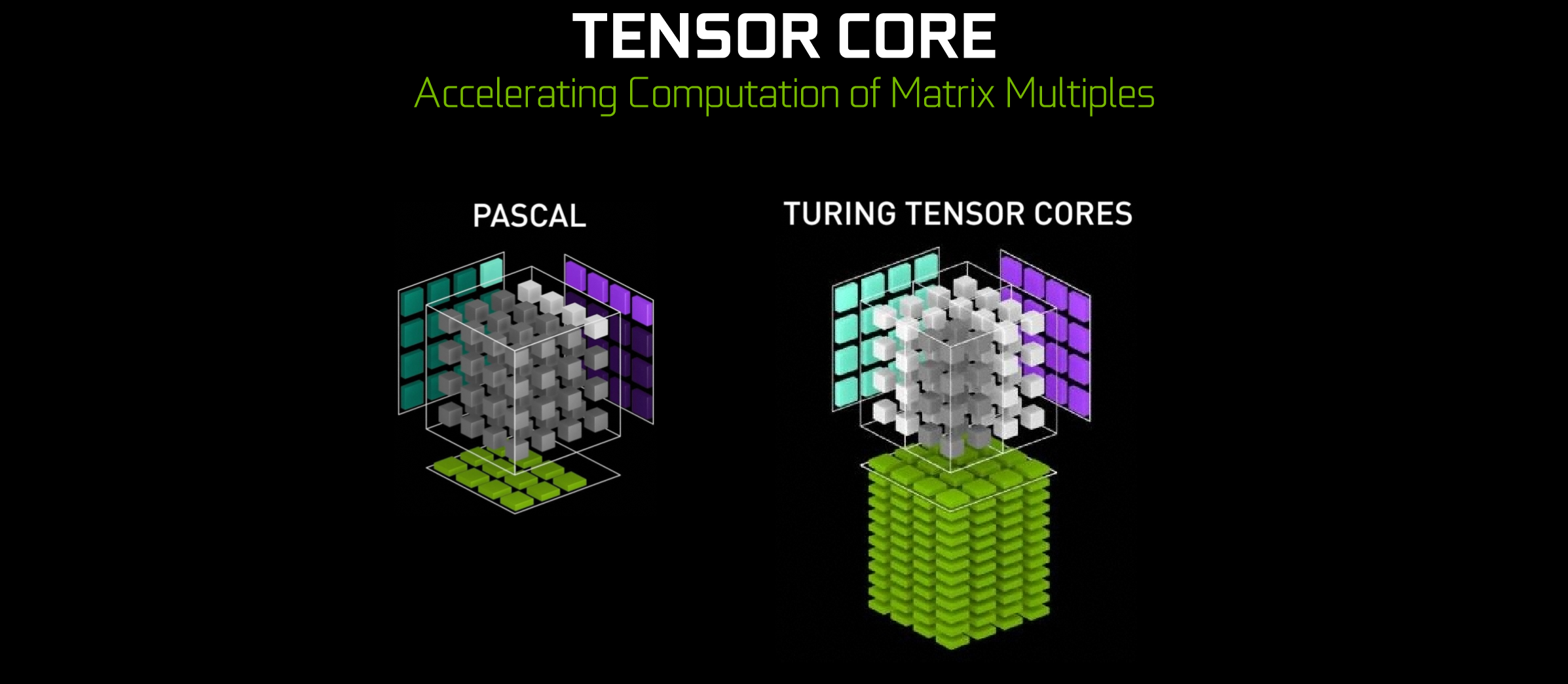

Remember how the redesigned Turing SMs sport eight Tensor Cores apiece? Well, Tensor Cores – introduced to the world with the Nvidia Volta architecture and enhanced here with low-precision modes suitable for their intended uses – are another piece of the Turing puzzle. In the field of deep learning, it so happens that a fairly common mathematical problem that needs solving is a matrix-based fused multiply-add (FMA) operation, whereby one 4x4 matrix is multiplied by another, the product of which is added to a third (a*b+c). Tensor Cores are simply cores that make this specific process very fast.
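To make that a*b+c operation concrete, here is a minimal sketch in plain Python. It only illustrates the arithmetic and is not how Tensor Cores are programmed or implemented: the function name `matrix_fma` and the sample matrices are our own inventions, and real hardware does this on low-precision data in fixed-function units.

```python
def matrix_fma(a, b, c):
    """Return a*b + c for square matrices given as lists of rows.

    Illustrative only: a hypothetical helper, not an Nvidia API.
    """
    n = len(a)
    return [
        [sum(a[i][k] * b[k][j] for k in range(n)) + c[i][j] for j in range(n)]
        for i in range(n)
    ]


# Made-up 4x4 inputs: an identity matrix, a counting matrix, and all ones.
identity = [[1 if i == j else 0 for j in range(4)] for i in range(4)]
b = [[i * 4 + j for j in range(4)] for i in range(4)]
c = [[1] * 4 for _ in range(4)]

# identity * b leaves b unchanged, so the result is b with 1 added everywhere.
d = matrix_fma(identity, b, c)
for row in d:
    print(row)
```

For 4x4 matrices, the product alone takes 4 x 4 x 4 = 64 multiplications, which gives a sense of why dedicated hardware for this one pattern pays off when it has to be repeated constantly.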

How very fast? Eight times faster than Pascal at the SM level, and 12x faster when comparing TU102 to GP102 since there are 1.5 times as many SMs as well. Those are certainly good numbers, but hang on a minute, what relevance does any of this have to gaming? After all, Tensor Cores might be great for deep learning workloads, but they’re taking up valuable transistors and power headroom that could’ve been given over to additional CUDA cores for all-purpose shading.

The answer, claims Nvidia, lies in inferencing, i.e. using what a trained neural network has learned to do Very Cool Stuff like filling in missing image data or driving cars. Training a neural network is computationally very expensive, but it’s something Nvidia is set up to do. Once a neural network is trained, you can “compress” the computation it does to something much smaller that consumer hardware (a single GPU perhaps!) can easily use to infer how to do said cool tasks with convincing accuracy.

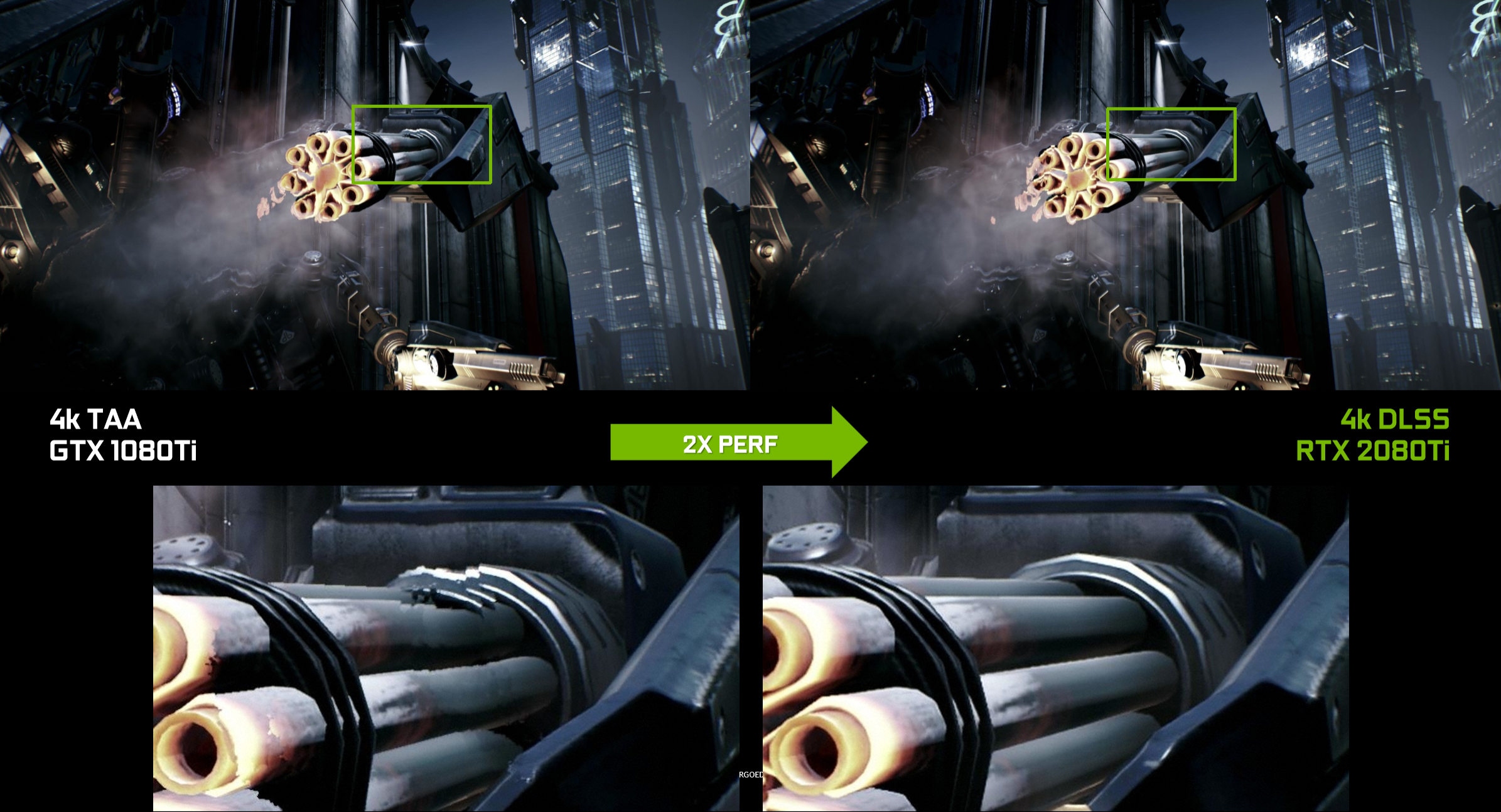

‘For god’s sake, bit-tech, just tell me if this is going to make my games better; my tea is cold now, I don’t buy GPUs to drive my cars, and I’m losing the will to live.’ Okay, okay. When it comes to gaming, Nvidia will be using Tensor Cores for a new technique called Deep Learning Super Sampling (DLSS), essentially an AI-driven post-processing tool for improving image quality that leverages Nvidia’s ability to train neural networks. So the answer is yes, Tensor Cores can make games better looking, but only in specific primed games and at a performance cost which remains unknown.

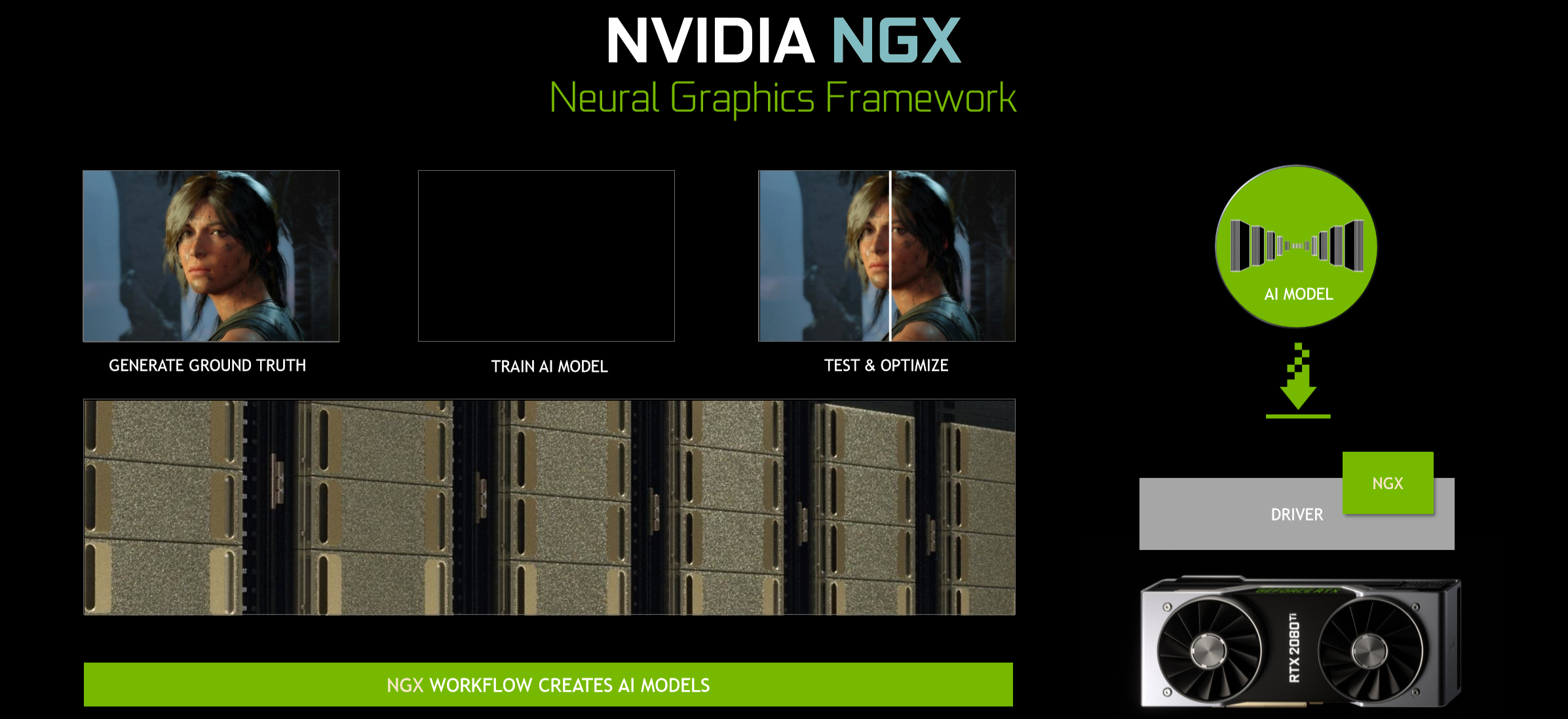

With DLSS, Nvidia can train its neural networks by collecting thousands of “ground truth” reference images rendered at 64x supersampling (64xSS) where each pixel is sampled not once but 64 times, vastly improving the accuracy of its shading and producing essentially a set of perfect frames – the network outputs. Matching frames are then rendered normally (one sample per pixel) – the network inputs. With a sprinkle of clever algorithms, the DLSS network then starts to measure the differences between the inputs and outputs with a particular focus on preserving detail while suppressing aliasing artifacts. It then feeds this data back into itself repeatedly, and eventually learns to avoid problems with blurring, disocclusion, and transparency that temporal-based anti-aliasing solutions suffer from.
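The difference between a one-sample input frame and a 64xSS reference can be shown with a toy example. The sketch below is ours, not Nvidia's: the `scene` function is a made-up image containing a single diagonal edge, and `render_pixel` simply averages an evenly spaced grid of samples, which is one simple way to supersample and not necessarily the pattern Nvidia uses.

```python
def scene(x, y):
    """Hypothetical scene: white (1.0) below the edge y = 0.5 * x, black (0.0) above."""
    return 1.0 if y < 0.5 * x else 0.0


def render_pixel(px, py, grid):
    """Shade pixel (px, py) by averaging grid * grid evenly spaced samples inside it."""
    total = 0.0
    for sy in range(grid):
        for sx in range(grid):
            x = px + (sx + 0.5) / grid
            y = py + (sy + 0.5) / grid
            total += scene(x, y)
    return total / (grid * grid)


# One sample per pixel, as in the normally rendered input frames.
one_sample = render_pixel(0, 0, 1)

# An 8 x 8 grid gives 64 samples per pixel, as in the 64xSS reference frames.
reference = render_pixel(0, 0, 8)

print(one_sample, reference)
```

The edge covers a quarter of this pixel. The single centre sample misses it entirely and reports 0.0, which is exactly how jagged edges arise, while the 64-sample average comes out at 0.25. A DLSS-style network is trained to get from the first kind of value towards the second without paying for the extra samples.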

The compressed form of this trained neural network can then be delivered via GeForce Experience to users with installations of supported games, thus enabling it as a graphics option. Nvidia claims to have measured a doubling of performance using DLSS with RTX 2080 Ti compared to Temporal Anti-Aliasing with GTX 1080 Ti, with results that are ‘similar’ in image quality. The RTX 2080 Ti is a faster card, of course, but not 2x as fast, so this speed-up is what Nvidia is betting on by including Tensor Cores.

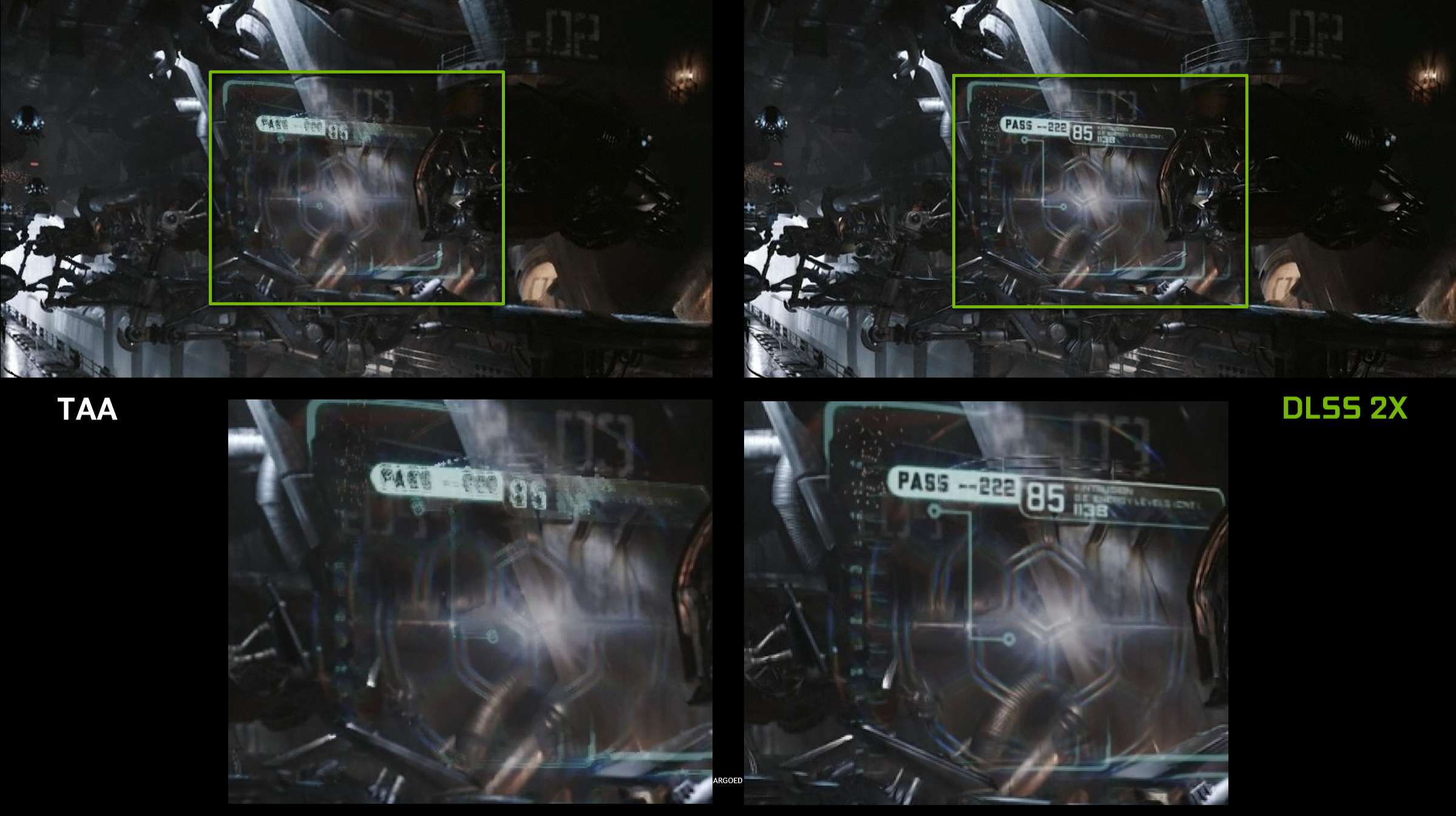

There’s also a higher quality DLSS 2X mode, where the network is trained to produce images as close to 64xSS as possible without actually having to sample every pixel 64 times, leading to results that are hard to distinguish (according to Nvidia). This is likely to impact performance in a big way, with Nvidia reckoning on an equivalent of 4x or even 8x MSAA, but the visual fidelity should be impressive.

At the time of writing, 25 titles are confirmed as having DLSS support incoming. Nvidia also claimed in a presentation that it can integrate some games into its neural networks in under a day. Sadly, it doesn’t look like any will be ready in time for us to test DLSS out at launch, so it’s something we’ll need to return to.

One final note – DLSS is simply one element of something Nvidia has termed NGX (Neural Graphics Acceleration), a deep learning-based technology stack exclusive to Turing. All features are managed by GeForce Experience, and other tools will include InPainting (filling in or replacing image content accurately), AI Slow Mo (interpolating regular video to produce convincing slow motion), and AI Super Rez (sharpening images and increasing their resolution), all of which will be leveraging the new Tensor Cores.
